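Main Content

Requirement

I am building ELK-based logging and need to show the logs on a web page, so the page needs pagination.

The client dependency I am using is too old, and I cannot upgrade it. As a result the count command is unavailable: calling it simply throws an error!

I considered lazy-loading the table, but every approach I found on Baidu depends on the total count.

The whole problem is that I cannot get the total, so lazy loading is pointless.

I also thought about how Baidu and Google show at most 73 pages of results, and planned to do the same.

In practice, though, when there are only a few pages of data the pager still offers 73 options, which means this approach needs the total as well.

No way around it! Back to Google.

First I found a couple of links that pointed me in the right direction: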

https://blog.csdn.net/zhuchunyan_aijia/article/details/122901472

https://facingissuesonit.com/2017/05/10/elasticsearch-rest-java-client-to-get-index-details-list/

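As long as I can send a raw command to the cluster (here, `GET /_cat/indices` through the low-level REST client), the rest is easy.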

<!--elasticsearch-->

<dependency>

<groupId>org.elasticsearch.client</groupId>

<artifactId>elasticsearch-rest-high-level-client</artifactId>

<version>6.4.3</version>

</dependency>

Java implementation

public EsPageInfo<List<GxptApiCallLog>> queryPage2(String params) {

JSONObject jsonObject = JSONObject.parseObject(params);

String pageIndex = jsonObject.getString("pageIndex");

String pageSize = jsonObject.getString("pageSize");

// Search conditions

SearchSourceBuilder builder = new SearchSourceBuilder();

MatchAllQueryBuilder queryBuilder = QueryBuilders.matchAllQuery();

// Attach the query to the search source

builder.query(queryBuilder);

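// Paginate the result set: from() takes a document offset, so convert the 1-based page index into (pageIndex - 1) * pageSize

builder.from((Integer.parseInt(pageIndex) - 1) * Integer.parseInt(pageSize)).size(Integer.parseInt(pageSize));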

// Sort

builder.sort("_id", SortOrder.DESC);

RestHighLevelClient client = ElasticSearchClient.getConnect();

RestClient lowLevelClient = client.getLowLevelClient();

IndexInfo[] indexArr = null;

int count = 0;

try {

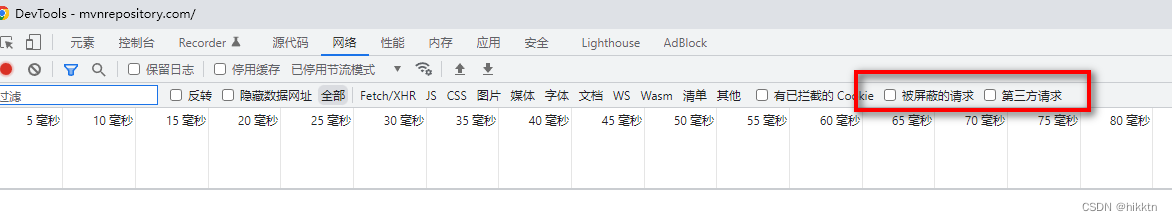

Response response = lowLevelClient.performRequest("GET", "/_cat/indices/myIndex-*?format=json",

Collections.singletonMap("pretty", "true"));

HttpEntity entity = response.getEntity();

ObjectMapper jacksonObjectMapper = new ObjectMapper();

indexArr = jacksonObjectMapper.readValue(entity.getContent(), IndexInfo[].class);

count = Arrays.stream(indexArr).map(IndexInfo::getDocumentCount).mapToInt(Integer::parseInt).sum();

//for(IndexInfo indexInfo:indexArr) {

// System.out.println(indexInfo);

// indexInfo.getDocumentCount()

//}

} catch (IOException e) {

throw new RuntimeException(e);

}

// Execute the search request

SearchResponse response = searchDocument(client, GateWayConstants.ES_ANTU_LOG, builder);

// The hits actually returned for this page

SearchHit[] searchHits = response.getHits().getHits();

List<GxptApiCallLog> apiCallLogsList = new ArrayList<>();

for (SearchHit searchHit : searchHits) {

String sourceAsString = searchHit.getSourceAsString();

GxptApiCallLog gxptApiCallLog = JSON.parseObject(sourceAsString, GxptApiCallLog.class);

apiCallLogsList.add(gxptApiCallLog);

}

//String json = JSON.toJSONString(apiCallLogsList);

EsPageInfo<List<GxptApiCallLog>> esPageInfo = new EsPageInfo<>();

esPageInfo.setCurrentPage(Integer.parseInt(pageIndex));

esPageInfo.setTotalItems(count);

esPageInfo.setItemsPrePage(Integer.parseInt(pageSize));

esPageInfo.setTotalPages(count / Long.parseLong(pageSize) + (long)(count % Long.parseLong(pageSize) != 0L ? 1

: 0));

esPageInfo.setItems(apiCallLogsList);

return esPageInfo;

}

/**

* Advanced index query

* @param indexName

* @param source

* @return

*/

public SearchResponse searchDocument(RestHighLevelClient client, String indexName, SearchSourceBuilder source){

// Build the search request

SearchRequest searchRequest = new SearchRequest();

searchRequest.indices(indexName);

searchRequest.source(source);

try {

// Execute the request

SearchResponse searchResponse = client.search(searchRequest, RequestOptions.DEFAULT);

return searchResponse;

} catch (Exception e) {

//log.warn("Failed to send the document search request to ES, request: " + searchRequest.toString(), e);

e.printStackTrace();

throw new RuntimeException(e);

}

}
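The paging arithmetic in `queryPage2` is easier to check when it is pulled out into a small helper. The following is only a sketch and is not part of the original code: `PageMath`, `offset`, `totalPages` and `reachablePages` are hypothetical names. It relies on two points: `from()` expects a document offset, i.e. `(pageIndex - 1) * pageSize` for a 1-based page index, and a search whose `from + size` exceeds `index.max_result_window` is rejected. The `10_000` constant assumes the index still uses the Elasticsearch default for that setting.

```java
/** Hypothetical helper (not in the original post) for the paging arithmetic of queryPage2. */
final class PageMath {

    /** Assumed default of index.max_result_window; change it if your index overrides the setting. */
    static final long DEFAULT_MAX_RESULT_WINDOW = 10_000L;

    private PageMath() {
    }

    /** Converts a 1-based page index into the document offset expected by from(). */
    static int offset(int pageIndex, int pageSize) {
        if (pageIndex < 1 || pageSize < 1) {
            throw new IllegalArgumentException("pageIndex and pageSize must be >= 1");
        }
        // multiplyExact throws instead of silently overflowing for very deep pages
        return Math.multiplyExact(pageIndex - 1, pageSize);
    }

    /** Number of pages needed to show totalItems documents (ceiling division). */
    static long totalPages(long totalItems, int pageSize) {
        if (totalItems < 0 || pageSize < 1) {
            throw new IllegalArgumentException("totalItems must be >= 0 and pageSize >= 1");
        }
        return totalItems / pageSize + (totalItems % pageSize == 0 ? 0 : 1);
    }

    /** Pages that from/size paging can actually reach: page p needs p * pageSize <= maxResultWindow. */
    static long reachablePages(long totalItems, int pageSize, long maxResultWindow) {
        long windowPages = maxResultWindow / pageSize;
        return Math.min(totalPages(totalItems, pageSize), windowPages);
    }
}
```

With a helper like this, the pager could offer `PageMath.reachablePages(count, pageSize, PageMath.DEFAULT_MAX_RESULT_WINDOW)` pages, which also gives a natural upper bound similar to the fixed page cap that search engines use.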

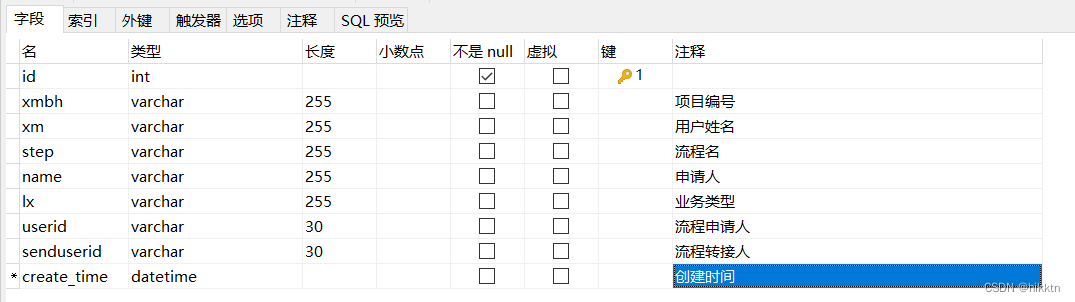

import com.fasterxml.jackson.annotation.JsonIgnoreProperties;

import com.fasterxml.jackson.annotation.JsonProperty;

@JsonIgnoreProperties(ignoreUnknown = true)

public class IndexInfo {

@JsonProperty(value = "health")

private String health;

@JsonProperty(value = "index")

private String indexName;

@JsonProperty(value = "status")

private String status;

@JsonProperty(value = "pri")

private int shards;

@JsonProperty(value = "rep")

private int replica;

@JsonProperty(value = "pri.store.size")

private String dataSize;

@JsonProperty(value = "store.size")

private String totalDataSize;

@JsonProperty(value = "docs.count")

private String documentCount;

public String getIndexName() {

return indexName;

}

public void setIndexName(String indexName) {

this.indexName = indexName;

}

public int getShards() {

return shards;

}

public void setShards(int shards) {

this.shards = shards;

}

public int getReplica() {

return replica;

}

public void setReplica(int replica) {

this.replica = replica;

}

public String getDataSize() {

return dataSize;

}

public void setDataSize(String dataSize) {

this.dataSize = dataSize;

}

public String getTotalDataSize() {

return totalDataSize;

}

public void setTotalDataSize(String totalDataSize) {

this.totalDataSize = totalDataSize;

}

public String getDocumentCount() {

return documentCount;

}

public void setDocumentCount(String documentCount) {

this.documentCount = documentCount;

}

public String getStatus() {

return status;

}

public void setStatus(String status) {

this.status = status;

}

public String getHealth() {

return health;

}

public void setHealth(String health) {

this.health = health;

}

}
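`IndexInfo` keeps `docs.count` as a `String`, and `queryPage2` sums those strings with `Integer::parseInt`. Two cases can break that sum: an index that reports no document count (my assumption is that closed indices are one such case), and a combined total that no longer fits in an `int`. The sketch below shows a more defensive sum; `DocCounts` and `sum` are hypothetical names, not part of the original code. Note also that the total only matches the search results when the pattern passed to `_cat/indices` covers the same indices as the search and the query is `match_all`.

```java
import java.util.Arrays;
import java.util.Objects;

/** Hypothetical helper (not in the original post) for summing docs.count values safely. */
final class DocCounts {

    private DocCounts() {
    }

    /** Sums the raw docs.count strings, skipping null or blank entries; long avoids int overflow. */
    static long sum(String... rawCounts) {
        return Arrays.stream(rawCounts)
                .filter(Objects::nonNull)
                .map(String::trim)
                .filter(s -> !s.isEmpty())
                .mapToLong(Long::parseLong)
                .sum();
    }
}
```

In `queryPage2` this could be called as `DocCounts.sum(Arrays.stream(indexArr).map(IndexInfo::getDocumentCount).toArray(String[]::new))`, with `count` declared as a `long`.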

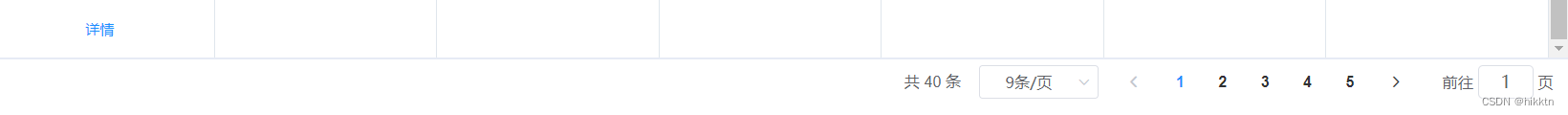

Final result: